Beginner's guide: An introduction to Mixed Reality.

Virtual Reality (VR), Augmented Reality (AR), and Mixed Reality (MR) are terms that are often used interchangeably. Strictly speaking, though, each of them means something different.

In this post we will cover the following topics:

- Differences between VR, AR, and MR

- Displays and some of the trackers used

- Processing techniques and representation

- Microsoft HoloLens

- Developing apps for Windows Mixed Reality

Virtual Reality.

VR aims to make you feel fully immersed in another world while blocking out everything else. It is a completely virtual environment in which nothing of reality is visible. The user is placed in a space entirely different from their actual location. That space is either computer-generated or recorded, and it completely occludes the user's actual surroundings.

VR technologies typically use compact, opaque head-mounted displays.

Augmented Reality.

AR is any kind of computer technology that overlays data on your current view while you can still see the world around you. Besides visual data, the audio you hear can be augmented as well. A GPS app that speaks directions to you as you walk could technically count as an AR app. Augmented Reality is the integration of digital, computer-generated information with the real environment in real time. Unlike Virtual Reality, which creates a completely artificial environment, Augmented Reality uses the existing real environment and overlays new information on it.

Augmented Reality headsets overlay data, 3D objects, and video onto your otherwise natural view. The objects do not have to emulate real things. For example, text that floats in your field of view is not an emulation of a real object but a useful piece of information.

Mixed Reality.

Mixed Reality augments the real world with virtual objects as if they were actually placed in the real environment. The virtual objects lock their positions relative to their real-world surroundings to produce a seamless view (for example, a virtual cat or ball placed on a real table stays on the table while we walk around it and look at it from different angles). Mixed Reality is everything that lies between a completely real and a completely virtual presentation.

Mixed Reality works toward a seamless integration of augmented content with your perception of the real world. In other words, it links "real" entities with "virtual" entities.

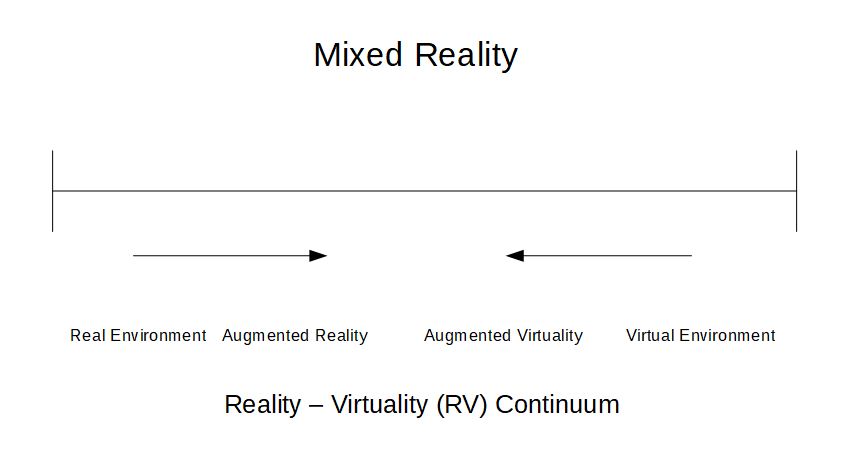

One of the first references to Mixed Reality appears in the 1994 research paper "A Taxonomy of Mixed Reality Visual Displays" by Paul Milgram and Fumio Kishino. They define the "virtuality continuum", also known as the "Reality-Virtuality (RV) Continuum".

Real Environment describes views or environments that contain only real things and no computer-generated elements. This includes what is observed through a conventional video display of a real scene as well as direct viewing of the same real scene, with or without a plain glass in between.

Virtual Environment describes views or environments that contain only virtual, computer-generated objects. The entire environment is created virtually and contains no real object, whether viewed directly or through a camera. A computer-graphics simulation of an aircraft is an example of a virtual environment.

Mixed Reality is defined as an environment in which real-world and virtual-world objects are presented together in a single display. It lies anywhere between the fully real and the fully virtual environment, that is, between the two extrema of the virtuality continuum.

Augmented Reality is where virtual objects are brought into the real world, as with a head-up display (HUD) on an aircraft windshield.

Augmented Virtuality refers to environments in which a virtual world contains certain elements of the real world, such as an image of the user's hand inside a virtual environment, or the ability to see other people in the room from within the virtual environment.

Classification based on display environments for MR.

- Monitor-based video displays of the real world, such as computer monitors, on which software-generated graphics or images are digitally overlaid.

- Video displays as in the first class, but shown on immersive head-mounted displays (HMDs) instead of monitors. The computer-generated images are again overlaid electronically or digitally.

- Head-mounted displays with see-through capability. These are partially immersive HMDs in which computer-generated scenes or graphics are optically superimposed on the real scene.

- Head-mounted displays without see-through capability, in which the real, immediate outside world is shown on the display as video captured by cameras. The displayed video must correspond orthoscopically with the real outside world. This creates a video see-through that is equivalent to an optical see-through.

- Completely graphic display environments to which video of reality is added. These displays are closer to Augmented Virtuality than to Augmented Reality. They can be fully immersive, partially immersive, or otherwise.

- Completely graphic environments in which real, physical objects interact with the computer-generated virtual environment. These are partially immersive environments. One example is a fully virtual room in which the user's physical hand can be used to grasp a virtual doorknob.

Displays used for Mixed Reality.

Monitors: Conventional computer monitors or other display devices such as televisions.

Handheld devices: These include mobile phones and tablets with a camera. The real scene is captured through the camera and the virtual scene is added dynamically.

Head-mounted displays: These are display devices worn on the user's head, with the display positioned in front of the eyes. They use sensors that track six degrees of freedom, which lets the system align virtual information with the physical world and adjust it to the user's head movements. VR headsets and Microsoft HoloLens are examples.
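To make the role of six-degree-of-freedom tracking more concrete, here is a minimal sketch in Python with NumPy. It is an illustration under simplifying assumptions, not how any particular headset works: the head pose is reduced to a position plus a yaw angle (a real tracker reports a full 3D rotation), and the names `head_pose_matrix` and `world_to_view` are hypothetical. The sketch shows that a world-locked virtual object keeps its world coordinates while its coordinates in the viewer's frame change as the head moves.

```python
import numpy as np

def head_pose_matrix(position, yaw_rad):
    """Build a 4x4 head-to-world transform from a position and a yaw angle.

    Simplification: only rotation about the vertical (y) axis is modelled.
    """
    c, s = np.cos(yaw_rad), np.sin(yaw_rad)
    pose = np.eye(4)
    pose[:3, :3] = np.array([[c, 0.0, s],
                             [0.0, 1.0, 0.0],
                             [-s, 0.0, c]])
    pose[:3, 3] = position
    return pose

def world_to_view(point_world, head_pose):
    """Express a world-space point in the head (view) frame."""
    view = np.linalg.inv(head_pose)  # world-to-head is the inverse pose
    p = np.append(np.asarray(point_world, dtype=float), 1.0)
    return (view @ p)[:3]

# A virtual cat anchored at a fixed world position (e.g. on a real table).
cat_world = np.array([0.0, 1.0, -2.0])

# Two tracked head poses: the user steps sideways and turns the head by 30 degrees.
pose_a = head_pose_matrix([0.0, 1.6, 0.0], 0.0)
pose_b = head_pose_matrix([1.0, 1.6, 0.0], np.deg2rad(30.0))

view_a = world_to_view(cat_world, pose_a)
view_b = world_to_view(cat_world, pose_b)

print("cat in view frame, pose A:", view_a)
print("cat in view frame, pose B:", view_b)
```

The renderer would draw the cat at `view_a` or `view_b` depending on the current pose, so the cat appears to stay on the table while the user moves.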

Eyeglasses: These devices are worn like ordinary glasses. They may contain cameras that capture the real-world view and redisplay the augmented view through the eyepieces. In some devices the AR imagery is projected through, or reflected off, the surfaces of the lenses. Google Glass and similar products are examples of such displays.

Head-up displays: The display sits at eye level, so the user does not have to look away to take in the information. An example is information projected onto the windshields of cars and aircraft.

Contact lenses: In the near future these are expected to contain ICs, LEDs, and antennas for wireless communication.

Virtual retinal display: With this technology, an image is scanned directly onto the retina of the human eye.

Spatial Augmented Reality (SAR): SAR uses multiple projectors to display virtual or graphical information on real objects. In SAR the display is not tied to a single user, so it scales to groups of users and enables co-located collaboration between them.

Trackers used to define the Mixed Reality scene.

Digital cameras and other optical sensors: Used to capture video and other optical information about the real scene.

Accelerometers: Used to compute the motion, velocity, and acceleration of the device relative to other objects in the scene.

GPS: Used to identify the geolocation of the device, which in turn can be used to provide location-specific input to the scene.

Gyroscopes: Used to measure and maintain the orientation and angular velocity of the device.

Solid-state compasses: Used to compute the heading of the device.

RFID (radio-frequency identification): Works by attaching tags to objects in the scene that the device can read over radio. RFID tags can be read even when the tag is not in the line of sight and is several meters away.

Other wireless sensors are also used to track devices in real and virtual space.

Techniques for processing scenes and representations.

Image Registration.

Registration is the process of aligning two images so that corresponding pixels map to the same points in the scene. The two images may come from different sensors at the same time (stereo imaging) or from the same sensor at different times (remote sensing). On an Augmented Reality platform, the two images can be the real and the virtual environment. Registration is required so that the images can be combined to improve information extraction.
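As a small illustration of what registration computes, the following sketch estimates the translation between two images using phase correlation. This technique is chosen here purely as an example and is not named in the text above. It only recovers whole-pixel shifts, whereas practical registration also has to deal with rotation, scale, and perspective. The function name `estimate_shift` and the synthetic test image are assumptions made for the example.

```python
import numpy as np

def estimate_shift(reference, moved):
    """Estimate the integer (row, col) shift d with moved ~ np.roll(reference, d).

    Uses phase correlation: the normalized cross-power spectrum of the two
    images has an inverse FFT that peaks at the translation between them.
    """
    f_ref = np.fft.fft2(reference)
    f_mov = np.fft.fft2(moved)
    cross_power = f_mov * np.conj(f_ref)
    cross_power /= np.abs(cross_power) + 1e-12  # keep phase only
    correlation = np.fft.ifft2(cross_power).real
    peak = np.unravel_index(np.argmax(correlation), correlation.shape)
    # Peaks past the midpoint correspond to negative (wrapped) shifts.
    shift = [p if p <= n // 2 else p - n
             for p, n in zip(peak, correlation.shape)]
    return tuple(int(s) for s in shift)

# Synthetic example: a random "scene" and a copy shifted by a known amount.
rng = np.random.default_rng(0)
reference = rng.random((64, 64))
moved = np.roll(reference, shift=(3, -5), axis=(0, 1))

shift = estimate_shift(reference, moved)
# Undo the estimated shift to bring the second image into register.
aligned = np.roll(moved, shift=(-shift[0], -shift[1]), axis=(0, 1))
print("estimated shift:", shift)
```

Once the two images are in register, pixel `(r, c)` in both refers to the same scene point, which is the precondition for overlaying virtual content on a camera image.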

Image registration draws on various methods from computer vision, the field concerned with how computers can gain a high-level understanding of digital content such as images or video. Computer vision methods related to video tracking are especially useful in Mixed Reality environments.

Video tracking is the process of locating moving objects over time using a camera. The goal is to associate target objects across consecutive video frames. To do so, algorithms analyze sequential video frames, estimate the motion of the target objects, and output it. The two main components of a visual tracking system are target representation and localization (identifying the object and determining its position) and filtering and data association (incorporating prior knowledge of the scene, handling the object's dynamics, and evaluating different hypotheses).

Common algorithms for target representation and localization include:

- Kernel-based tracking: a localization procedure based on maximizing a similarity measure (a real-valued function that quantifies the similarity between two objects). It works iteratively. This method is also known as mean-shift tracking.

- Contour tracking: these methods evolve an initial contour from the previous frame to its new position in the next frame. This is a way of deriving object boundaries. The Condensation algorithm is one example.
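The iterative nature of mean-shift tracking can be sketched in a few lines. The example below is a simplified illustration, not a full kernel-based tracker. It assumes that a per-pixel weight map (how strongly each pixel resembles the target) has already been computed, for instance from a color-histogram comparison, and it uses a flat square window instead of a smooth kernel. The function name `mean_shift` and the synthetic blob are hypothetical.

```python
import numpy as np

def mean_shift(weights, center, half_size, max_iter=20, tol=0.5):
    """Move a square window to the local centroid of `weights`.

    Each iteration re-centres the window on the weighted mean of the pixel
    coordinates inside it, which climbs towards a local maximum of similarity.
    """
    rows, cols = weights.shape
    cy, cx = float(center[0]), float(center[1])
    for _ in range(max_iter):
        top = max(int(round(cy)) - half_size, 0)
        bottom = min(int(round(cy)) + half_size + 1, rows)
        left = max(int(round(cx)) - half_size, 0)
        right = min(int(round(cx)) + half_size + 1, cols)
        window = weights[top:bottom, left:right]
        total = window.sum()
        if total <= 0:
            break  # nothing resembling the target inside the window
        ys, xs = np.mgrid[top:bottom, left:right]
        new_cy = (ys * window).sum() / total
        new_cx = (xs * window).sum() / total
        step = np.hypot(new_cy - cy, new_cx - cx)
        cy, cx = new_cy, new_cx
        if step < tol:
            break  # converged
    return cy, cx

# Synthetic weight map: the target appears as a blob centred at (40, 60).
yy, xx = np.mgrid[0:100, 0:100]
weights = np.exp(-((yy - 40) ** 2 + (xx - 60) ** 2) / (2 * 8.0 ** 2))

# Start from the target's position in the previous frame and track it.
tracked = mean_shift(weights, center=(30, 50), half_size=15)
print("tracked centre:", tracked)
```

In a tracker this would run once per video frame, starting each time from the position found in the previous frame.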

Augmented Reality Markup Language (ARML).

ARML is a data standard used to describe and define Augmented Reality (AR) scenes and their interactions with the real world. It is developed by a dedicated ARML 2.0 Standards Working Group within the OGC (Open Geospatial Consortium). It includes an XML grammar for describing the position and appearance of virtual entities in the AR scene. It also includes ECMAScript bindings, which allow dynamic access to the properties of the virtual elements as well as event handling.

Because ARML is built on a generic object model, it can be serialized in several languages. Currently ARML defines an XML serialization and a JSON serialization for the ECMAScript bindings. The ARML object model consists of the following concepts:

Features: A feature describes the physical object that is to be augmented. The object is described by a set of metadata such as ID, name, and description. A feature has one or more anchors.

Visual Assets: These describe the appearance of the virtual objects in the augmented scene. Visual assets that can be described include plain text, images, HTML content, and 3D models. They can be oriented and scaled.

Anchor: An anchor describes the position of the physical object in the real world. The four anchor types are Geometries, Trackables, RelativeTo, and ScreenAnchor.
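The relationship between these three concepts can be sketched as a small object model. The Python classes below are an illustration only. They are not the ARML schema or its ECMAScript bindings, and the field names, the attachment of visual assets to anchors, and the `location` tuple are assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

# Anchor types as listed in the text above.
ANCHOR_TYPES = {"Geometry", "Trackable", "RelativeTo", "ScreenAnchor"}
# Asset kinds as listed in the text above (labels are illustrative).
ASSET_KINDS = {"text", "image", "html", "model"}

@dataclass
class VisualAsset:
    """Appearance of a virtual object in the augmented scene."""
    kind: str
    content: str
    scale: float = 1.0

    def __post_init__(self):
        if self.kind not in ASSET_KINDS:
            raise ValueError(f"unknown asset kind: {self.kind}")

@dataclass
class Anchor:
    """Where the physical object is located in the real world."""
    anchor_type: str
    location: Optional[Tuple[float, float]] = None  # e.g. (lat, lon); assumed
    assets: List[VisualAsset] = field(default_factory=list)

    def __post_init__(self):
        if self.anchor_type not in ANCHOR_TYPES:
            raise ValueError(f"unknown anchor type: {self.anchor_type}")

@dataclass
class Feature:
    """The physical object to be augmented, with its metadata and anchors."""
    id: str
    name: str
    description: str = ""
    anchors: List[Anchor] = field(default_factory=list)

# A hypothetical feature: a building labelled with a short text.
label = VisualAsset(kind="text", content="Town hall, built 1890", scale=1.5)
anchor = Anchor(anchor_type="Geometry", location=(47.48, 13.14), assets=[label])
town_hall = Feature(id="f1", name="Town hall", anchors=[anchor])
print(town_hall)
```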

Microsoft HoloLens.

HoloLens is essentially a holographic computer built into a headset that lets you see, hear, and interact with holograms in an environment such as a living room or an office. Unlike other Mixed Reality devices such as Google Glass, which are only peripherals or accessories that must connect wirelessly to another processing device, HoloLens is a Windows 10 PC in its own right. HoloLens uses high-definition lenses and spatial sound technology to create this immersive, interactive holographic experience.

Input to a HoloLens can come from gaze, gestures, voice, gamepads, and motion controllers. It also receives perception and spatial features such as coordinates, spatial sound, and spatial mapping. Mixed Reality devices, including HoloLens, can also use the inputs already available on Windows, including mouse, keyboard, gamepads, and more. With HoloLens, hardware accessories connect to the device over Bluetooth.

Developing for Mixed Reality.

Windows 10 is built from the ground up to be compatible with Mixed Reality devices. Apps developed for Windows 10 are therefore compatible with multiple devices, including HoloLens and other immersive headsets. Which environment to use when developing for MR devices depends on the type of app we want to build.

For 2D applications we can use any tool that is used to develop Universal Windows Platform apps, which are suitable for all Windows environments (Windows Phone, PC, tablets, and so on). These apps are experienced as 2D projections and can work across multiple device types.

Immersive and holographic applications, however, need tools that take advantage of the Windows Mixed Reality API. Visual Studio can be used together with 3D development tools such as Unity 3D to build such applications. If you are interested in building your own engine, you can use DirectX and other Windows APIs.

Universal Windows Platform apps exported from Unity run on any Windows 10 device. For HoloLens, however, we should take advantage of features that are available only on HoloLens. To do this, we set the TargetDeviceFamily to "Windows.Holographic" in the Package.appxmanifest file in Visual Studio. The resulting solution can then be run on the HoloLens Emulator.
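The manifest change is normally made by hand in Visual Studio, but as an illustration of what is being edited, the following Python sketch applies it to a heavily trimmed manifest. The manifest string is not a complete, valid Package.appxmanifest, the version numbers are placeholders, and the function name `target_holographic` is hypothetical. Only the `TargetDeviceFamily` element and the "Windows.Holographic" value come from the text above.

```python
import xml.etree.ElementTree as ET

# Default namespace used by Windows 10 app package manifests.
NS = "http://schemas.microsoft.com/appx/manifest/foundation/windows10"
ET.register_namespace("", NS)

# Trimmed-down stand-in for Package.appxmanifest (placeholder versions).
MANIFEST = f"""<Package xmlns="{NS}">
  <Dependencies>
    <TargetDeviceFamily Name="Windows.Universal" MinVersion="10.0.10240.0" MaxVersionTested="10.0.10586.0" />
  </Dependencies>
</Package>"""

def target_holographic(manifest_xml: str) -> str:
    """Return the manifest with every TargetDeviceFamily set to Windows.Holographic."""
    root = ET.fromstring(manifest_xml)
    for family in root.iter(f"{{{NS}}}TargetDeviceFamily"):
        family.set("Name", "Windows.Holographic")
    return ET.tostring(root, encoding="unicode")

updated = target_holographic(MANIFEST)
print(updated)
```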

Gaze is how focus is placed on holograms. It is the center of the field of view when a user looks through the HoloLens, and it essentially acts as a mouse cursor. This cursor can be custom-designed for your app. HoloLens uses the position and orientation of the user's head, not their eyes, to determine the gaze vector. Once an object is targeted with gaze, gestures can be used for the actual interaction. The most common gesture is the "tap", which works like a left click. "Tap and hold" can be used to move objects in 3D space. Events can also be triggered with custom voice commands. RayCast, GestureRecognizer, and KeywordRecognizer are some of the objects, and OnSelect, OnPhraseRecognized, OnCollisionEnter, OnCollisionStay, and OnCollisionExit some of the useful event handlers, that the development environments provide for capturing these interactions.
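The idea behind gaze targeting can be sketched independently of any engine. The Python example below is not the Unity or HoloLens API. It casts a ray from the head position along the head's forward direction and reports the nearest hologram it hits. Holograms are approximated as spheres, and the names `gaze_ray`, `ray_hits_sphere`, and `focused_hologram` are hypothetical.

```python
import numpy as np

def gaze_ray(head_position, head_forward):
    """Gaze ray from head pose: origin plus unit direction (head, not eyes)."""
    origin = np.asarray(head_position, dtype=float)
    direction = np.asarray(head_forward, dtype=float)
    return origin, direction / np.linalg.norm(direction)

def ray_hits_sphere(origin, direction, center, radius):
    """Distance along the ray to the first hit on a sphere, or None if missed."""
    oc = origin - np.asarray(center, dtype=float)
    b = np.dot(oc, direction)
    c = np.dot(oc, oc) - radius ** 2
    disc = b * b - c
    if disc < 0:
        return None  # ray passes beside the sphere
    root = np.sqrt(disc)
    t = -b - root
    if t < 0:
        t = -b + root  # ray starts inside the sphere
    return t if t >= 0 else None

def focused_hologram(origin, direction, holograms):
    """Name and distance of the closest hologram under the gaze cursor."""
    best = (None, None)
    for name, (center, radius) in holograms.items():
        t = ray_hits_sphere(origin, direction, center, radius)
        if t is not None and (best[1] is None or t < best[1]):
            best = (name, t)
    return best

# Two holograms in the room, approximated by bounding spheres.
holograms = {
    "cat": ((0.0, 1.6, -2.0), 0.25),
    "ball": ((1.5, 1.6, -2.0), 0.25),
}

origin, direction = gaze_ray([0.0, 1.6, 0.0], [0.0, 0.0, -1.0])
focused, distance = focused_hologram(origin, direction, holograms)
print("gaze is on:", focused, "at distance", distance)
```

A tap gesture or voice command would then be routed to whichever hologram is currently in focus, which is the role the event handlers named above play in an actual app.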

Why discuss this now?

It is hardly possible to do the topic justice in a short blog post, but the idea is to open the door to the possibilities and areas of development in Mixed Reality and to start conversations about them. Mixed Reality has an immense range of applications, including but not limited to literature, archaeology, visual arts, commerce, architecture, education, medicine, industrial design, flight training, the military, emergency management, video games, and more.

For software developers and other stakeholders who build traditional software for desktop, mobile, and enterprise environments, creating apps and experiences for HoloLens and similar devices is a step into the unknown. The way we think about experiences in a 3D space will challenge and change our traditional understanding of software and application development. The entire development landscape is going to change soon, if not sooner.

The future of digital realities such as Augmented and Mixed Reality looks bright, and getting on board early is what will allow us to take full advantage of the platform.

Thank you for visiting.