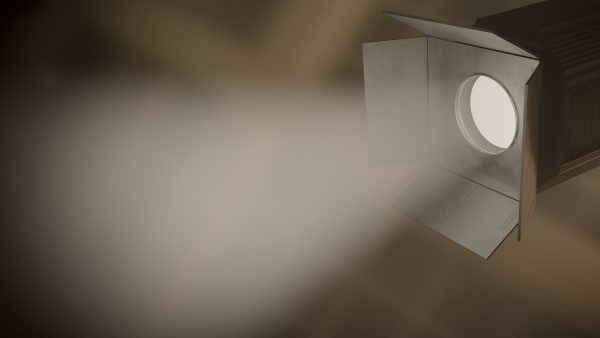

Beginner's guide: What is cone tracing?

Cone tracing and beam tracing are derivatives of the ray tracing algorithm that replace rays, which have no thickness, with thick rays.

This is done for two reasons:

From the point of view of the physics of light transport.

The energy reaching a pixel comes from the entire solid angle under which the eye sees that pixel in the scene, not from a single sample at its center. This leads to the key notion of the pixel footprint on surfaces or in texture space, which is the back-projection of the pixel onto the scene.
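To make the footprint idea concrete, here is a minimal sketch that estimates how large one pixel's back-projection becomes at a given distance. It assumes a pinhole camera with square pixels and looks only at a pixel near the image center; the function names `pixel_half_angle` and `footprint_radius` are hypothetical, and the `1 / cos` stretch for tilted surfaces is a rough approximation of the footprint's long axis, not an exact projection.

```python
import math

def pixel_half_angle(vertical_fov_deg: float, image_height_px: int) -> float:
    """Approximate half-angle (radians) of the cone through one central pixel."""
    # Height of the image plane at unit distance from the pinhole.
    plane_height = 2.0 * math.tan(math.radians(vertical_fov_deg) / 2.0)
    pixel_size = plane_height / image_height_px
    return math.atan(pixel_size / 2.0)

def footprint_radius(distance: float, half_angle: float,
                     cos_incidence: float = 1.0) -> float:
    """Approximate footprint radius on a surface hit at `distance`.

    `cos_incidence` is the cosine of the angle between the cone axis and
    the surface normal; 1.0 means the surface faces the viewer directly.
    """
    radius = distance * math.tan(half_angle)
    # Assumption: a tilted surface stretches the footprint by about 1 / cos.
    return radius / max(cos_incidence, 1e-6)

if __name__ == "__main__":
    angle = pixel_half_angle(60.0, 1080)
    for d in (1.0, 10.0, 100.0):
        print(d, footprint_radius(d, angle))
```

The footprint grows linearly with distance, which is why a single central sample represents distant surfaces increasingly poorly.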

The description above corresponds to the simplified pinhole camera optics classically used in computer graphics. Note that this approach can also represent a lens-based camera, and thus depth-of-field effects, by using a cone whose cross-section shrinks from the lens size to zero at the focal plane and then grows again.
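The shape of such a lens cone can be sketched as a radius that depends on the distance along the cone axis. This is a minimal illustration under a thin-lens assumption, ignoring the additional spread due to the pixel's own size; `dof_cone_radius` and its parameters are hypothetical names.

```python
def dof_cone_radius(t: float, lens_radius: float, focus_distance: float) -> float:
    """Cross-section radius of the lens cone at distance `t` from the lens.

    The radius equals the lens radius at t = 0, falls linearly to zero
    at the focal plane (t = focus_distance), and grows again behind it.
    """
    return lens_radius * abs(1.0 - t / focus_distance)

if __name__ == "__main__":
    for t in (0.0, 1.0, 2.0, 4.0):
        print(t, dof_cone_radius(t, lens_radius=0.05, focus_distance=2.0))
```

Objects at the focal plane are seen through a vanishing cross-section and appear sharp, while objects in front of or behind it are integrated over a wider disc and appear blurred.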

Moreover, a real optical system does not focus light onto exact points, because of diffraction and imperfections. This can be modeled as a point spread function (PSF) that weights contributions within a solid angle larger than the pixel.

From a signal processing point of view.

Ray-traced images suffer from strong aliasing because the "projected geometric signal" contains very high frequencies, beyond the Nyquist-Shannon maximum frequency that can be represented at the pixel sampling rate. The input signal therefore has to be low-pass filtered, i.e. integrated over a solid angle around the pixel center.

Note that, contrary to intuition, the filter should not be the pixel footprint itself, since a box filter has poor spectral properties. Conversely, the ideal sinc function is not practical, because it has infinite support and takes negative values. A Gaussian or a Lanczos filter is considered a good compromise.
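The filters mentioned above can be written down as one-dimensional kernels and used to weight samples around a pixel center. The following is only a sketch: the default widths (`radius`, `sigma`, `a`) are illustrative choices rather than recommended values, `filtered_pixel` is a hypothetical helper, and a real renderer would apply the kernel in two dimensions.

```python
import math

def box(x: float, radius: float = 0.5) -> float:
    """Box filter: constant weight inside the pixel, zero outside."""
    return 1.0 if abs(x) <= radius else 0.0

def gaussian(x: float, sigma: float = 0.5) -> float:
    """Unnormalized Gaussian; always positive, decays smoothly."""
    return math.exp(-0.5 * (x / sigma) ** 2)

def sinc(x: float) -> float:
    """Normalized sinc: the ideal low-pass kernel, with infinite support."""
    return 1.0 if x == 0.0 else math.sin(math.pi * x) / (math.pi * x)

def lanczos(x: float, a: int = 2) -> float:
    """Sinc windowed by a wider sinc, truncated to |x| < a."""
    return sinc(x) * sinc(x / a) if abs(x) < a else 0.0

def filtered_pixel(samples, kernel) -> float:
    """Weighted average of (offset, value) samples.

    Offsets are in pixel units relative to the pixel center. Assumes the
    weights do not sum to zero for the given sample offsets.
    """
    total_weight = sum(kernel(dx) for dx, _ in samples)
    return sum(kernel(dx) * value for dx, value in samples) / total_weight

if __name__ == "__main__":
    samples = [(-1.0, 0.0), (-0.5, 0.0), (0.0, 1.0), (0.5, 1.0), (1.0, 1.0)]
    for kernel in (box, gaussian, lanczos):
        print(kernel.__name__, filtered_pixel(samples, kernel))
```

Unlike the box filter, the Gaussian and Lanczos kernels also weight samples outside the pixel, which is what the text means by a filter wider than the footprint.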

Computer graphics models.

Cone tracing and beam tracing rely on different simplifications. The first assumes a circular cross-section and handles the intersection with a variety of possible shapes. The second handles an exact pyramidal beam through the pixel and along a complex path, but it only works for polyhedral shapes.
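As an illustration of what intersecting a circular cone with a shape involves, here is a sketch of one of the simplest cases: testing whether an infinite solid cone overlaps a sphere. It assumes a unit-length axis and a half-angle below 90 degrees, and it works in the plane spanned by the axis and the sphere center. The function name and the tuple-based vector helpers are hypothetical, and this is not presented as the formulation used by any particular cone tracer.

```python
import math

def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cone_intersects_sphere(apex, axis, half_angle, center, radius) -> bool:
    """True if the solid cone (apex, unit `axis`, `half_angle`) overlaps the sphere."""
    v = _sub(center, apex)
    t = _dot(v, axis)                                  # distance along the axis
    d = math.sqrt(max(_dot(v, v) - t * t, 0.0))        # distance from the axis
    s, c = math.sin(half_angle), math.cos(half_angle)
    if t * c + d * s < 0.0:
        # The sphere center lies "behind" the cone: the apex is the closest point.
        return _dot(v, v) <= radius * radius
    # Signed distance from the center to the cone's lateral surface
    # (negative when the center is inside the cone).
    return d * c - t * s <= radius

if __name__ == "__main__":
    apex, axis = (0.0, 0.0, 0.0), (0.0, 0.0, 1.0)
    print(cone_intersects_sphere(apex, axis, math.radians(5), (0.5, 0.0, 5.0), 0.2))
```

With `half_angle = 0` the test reduces to an ordinary ray-sphere overlap test. Even this simple case only answers whether the cone touches the sphere; computing what fraction of the cone's cross-section is covered is considerably harder.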

Cone tracing solves certain problems related to sampling and aliasing that can plague conventional ray tracing. However, cone tracing creates a host of problems of its own. For example, merely intersecting a cone with the scene geometry leads to an enormous variety of possible results. For this reason, cone tracing has remained mostly unpopular. In recent years, Monte Carlo algorithms such as distributed ray tracing (i.e. explicit stochastic integration over the pixel) have become far more widely used than cone tracing, because the results converge to the exact solution when enough samples are used. The convergence is so slow, however, that even offline rendering can require a great deal of time to avoid noise. Recent work focuses on removing this noise with machine learning techniques.
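The stochastic alternative can be sketched with a toy example: a single pixel, treated as the unit square, that is partly covered by a white region bounded by a straight edge. The `scene` function and the edge position are made up for illustration, and the example integrates with a box filter over the pixel for simplicity.

```python
import random

def scene(x: float, y: float) -> float:
    """Hypothetical scene: white above the edge y = 0.3 + 0.5 * x, black below."""
    return 1.0 if y > 0.3 + 0.5 * x else 0.0

def estimate_pixel(n_samples: int, rng: random.Random) -> float:
    """Monte Carlo estimate of the average scene value over the pixel."""
    total = 0.0
    for _ in range(n_samples):
        # One ray per sample, through a uniformly random point in the pixel.
        total += scene(rng.random(), rng.random())
    return total / n_samples

if __name__ == "__main__":
    rng = random.Random(0)
    print("center sample only:", scene(0.5, 0.5))
    for n in (4, 64, 1024, 16384):
        print(n, "samples:", estimate_pixel(n, rng))
```

The exact coverage of this edge is 0.45, while a single ray through the pixel center sees only black. The Monte Carlo estimate approaches the exact value, but its error shrinks only with the square root of the sample count, which is the slow convergence described above.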

Differential cone tracing, which considers a differential angular neighborhood around a ray, avoids the complexity of exact geometric intersection but requires a level-of-detail (LOD) representation of the geometry and appearance of the objects. Mipmapping is an approximation of it that is limited to integrating the surface texture within a cone footprint. Differential ray tracing extends it to textured surfaces viewed through complex paths of cones that are reflected or refracted by curved surfaces.
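The link between a cone footprint and mipmapping can be sketched as a level-of-detail computation: the wider the footprint in texels, the coarser the mip level that is read. This sketch assumes an isotropic footprint and a mip chain in which each level halves the resolution; `footprint_in_texels`, `mip_level`, and their parameters are hypothetical names, and real graphics APIs derive the level from screen-space derivatives instead.

```python
import math

def footprint_in_texels(distance: float, half_angle: float,
                        texels_per_world_unit: float) -> float:
    """Footprint diameter, in texels, of a cone hitting a facing surface."""
    return 2.0 * distance * math.tan(half_angle) * texels_per_world_unit

def mip_level(footprint_width_texels: float, num_levels: int) -> float:
    """Fractional mip level whose texel size roughly matches the footprint."""
    if footprint_width_texels <= 1.0:
        return 0.0  # footprint smaller than one texel: use the finest level
    return min(math.log2(footprint_width_texels), float(num_levels - 1))

if __name__ == "__main__":
    half_angle = math.atan(0.001)
    for distance in (1.0, 10.0, 100.0):
        width = footprint_in_texels(distance, half_angle, texels_per_world_unit=256.0)
        print(distance, width, mip_level(width, num_levels=10))
```

A fractional result would typically be used to blend between the two nearest levels.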

Acceleration structures for cone tracing, such as bounding volume hierarchies and k-d trees, have been studied by Wiche.
